Scaling QA for an Open-World RPG Without Scaling the Team

How a mid-size studio used Filuta AI to test hundreds of hours of gameplay in days — with full reproducibility.

The Challenge

A mid-size European studio was in the final stretch of development on an ambitious open-world action RPG — a narrative-driven title with branching storylines, hundreds of quests, dynamic NPC behavior, real-time physics, and a crafting system that interacted with the game economy.

With a fixed release date and a QA team of 15, the studio faced a familiar problem: the game was simply too large to test thoroughly.

- Quest interactions: Over 200 quests with branching paths, many depending on world-state variables that changed based on player choices

- Emergent behavior: Physics, AI, and economy systems interacted in unpredictable ways that scripted tests couldn't anticipate

- Platform coverage: PC, PlayStation, and Xbox builds each required separate validation passes

- Regression risk: Every content patch risked breaking previously stable quest chains or gameplay systems

The studio's QA lead estimated they were covering roughly 20% of meaningful gameplay paths per cycle. The rest was a gamble.

The Approach

Filuta AI deployed autonomous testing agents that played the game like real players — but at machine scale and with systematic intent.

Autonomous Gameplay Exploration

The agents navigated the open world, accepted quests, made dialogue choices, engaged in combat, used the crafting system, and interacted with NPCs, all without predefined scripts. Filuta's Composite AI paired two layers: a symbolic planning layer that ensured systematic coverage of quest trees and world states, and a machine learning layer that handled real-time visual interpretation and adaptive gameplay.
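This case study does not describe Filuta's planner. As a rough illustration of what "systematic coverage of quest trees and world states" can mean, here is a minimal sketch. It assumes a quest can be modeled as hypothetical STRIPS-style actions over world-state flags, enumerates every reachable state with breadth-first search, and flags states that have no way forward and no reward as candidate progression blockers. The `Action` type, flag names, and toy quest are illustrative assumptions, not Filuta's implementation.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """Hypothetical STRIPS-style action over a set of world-state flags."""
    name: str
    pre: frozenset                   # flags that must all be set
    add: frozenset                   # flags set by the action
    delete: frozenset = frozenset()  # flags cleared by the action


def reachable_states(start, actions):
    """Breadth-first search over world states.

    Returns {state: action path that first reached it}, so every state the
    search visits comes with a replayable plan."""
    start = frozenset(start)
    paths = {start: []}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        for act in actions:
            if act.pre <= state:
                nxt = (state - act.delete) | act.add
                if nxt not in paths:
                    paths[nxt] = paths[state] + [act.name]
                    queue.append(nxt)
    return paths


def dead_ends(paths, actions, goal):
    """Reachable states that lack the goal flag and have no state-changing move."""
    stuck = []
    for state, path in paths.items():
        moves = [(state - a.delete) | a.add for a in actions if a.pre <= state]
        if goal not in state and all(m == state for m in moves):
            stuck.append((sorted(state), path))
    return stuck


# Toy quest with one branching choice (hypothetical flag names).
ACTIONS = [
    Action("accept_quest", frozenset({"quest_available"}),
           frozenset({"quest_active"}), frozenset({"quest_available"})),
    Action("side_guild", frozenset({"quest_active"}),
           frozenset({"guild_ally"}), frozenset({"quest_active"})),
    Action("side_thieves", frozenset({"quest_active"}),
           frozenset({"thief_ally"}), frozenset({"quest_active"})),
    Action("claim_reward", frozenset({"guild_ally"}), frozenset({"rewarded"})),
]

paths = reachable_states({"quest_available"}, ACTIONS)
print(dead_ends(paths, ACTIONS, goal="rewarded"))
# [(['thief_ally'], ['accept_quest', 'side_thieves'])]
```

The search returns the thieves branch as a dead end together with the exact action path that reaches it, which is the kind of blocker a tester following only the main path would miss. Breadth-first order also means each recorded path is a shortest route to its state.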

Targeted Regression Testing

After each content patch, agents re-explored affected quest chains and gameplay systems, comparing behavior against previous builds to detect regressions. This ran automatically in the CI pipeline — no manual test case updates required.
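The exact comparison Filuta runs between builds is not described here. A minimal sketch of the idea, assuming agents record one outcome string per quest on each build: any quest that completed on the previous build but not on the new one counts as a regression, and a non-zero status fails the CI job. The function names, outcome strings, and quest ids are hypothetical.

```python
def find_regressions(baseline, candidate):
    """Compare per-quest outcomes recorded by agents on two builds.

    Both arguments map a quest id to an outcome string such as "completed"
    or "blocked". A regression is any quest that completed on the baseline
    build but did not complete on the candidate build, including quests the
    candidate run never reached."""
    regressions = {}
    for quest, before in baseline.items():
        after = candidate.get(quest, "not_reached")
        if before == "completed" and after != "completed":
            regressions[quest] = (before, after)
    return regressions


def ci_exit_code(regressions):
    """Non-zero exit status fails the CI job when any regression is found."""
    return 1 if regressions else 0


# Hypothetical outcomes from two consecutive builds.
baseline = {"q_harbor": "completed", "q_mine": "completed",
            "q_tower": "blocked", "q_bridge": "completed"}
candidate = {"q_harbor": "completed", "q_mine": "blocked", "q_tower": "blocked"}

regressions = find_regressions(baseline, candidate)
print(regressions)
# {'q_mine': ('completed', 'blocked'), 'q_bridge': ('completed', 'not_reached')}
```

`q_tower` is not reported because it was already blocked on the baseline build; only changes for the worse are flagged. In a pipeline, the job would pass `ci_exit_code(regressions)` to `sys.exit` so that any regression fails the build.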

Edge Case Discovery

The agents deliberately explored unusual player behaviors: sequence-breaking quests, stacking items in unintended ways, triggering NPC interactions during combat, and pushing physics objects into collision boundaries. These are the scenarios human testers rarely try but players inevitably find.

The Results

- Hundreds of hours of gameplay tested across all three platforms: work that would have taken the full QA team weeks

- 74 critical bugs identified before release, including 12 progression blockers in late-game quest chains that manual QA had not reached

- Automated regression coverage for every content patch — results available within hours of each build

- Full reproduction steps for every defect — exact input sequences, world state, and video captures, eliminating the "works on my machine" problem
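A defect is only reproducible if every source of nondeterminism is pinned down. A minimal sketch, assuming a game whose randomness flows entirely from one recorded seed: the hypothetical `ReproRecord` stores the build id, seed, and exact input sequence, and replaying it yields an identical world-state digest. A real engine would also have to make physics and frame timing deterministic, which this toy ignores.

```python
import hashlib
import json
import random
from dataclasses import dataclass, field


@dataclass
class ReproRecord:
    """Hypothetical bug record: everything needed to replay a run exactly."""
    build_id: str
    seed: int                                   # seeds all in-game randomness
    inputs: list = field(default_factory=list)  # exact input sequence


def replay(record):
    """Toy deterministic simulation: the same record always yields the same state."""
    rng = random.Random(record.seed)
    state = {"x": 0, "loot": 0}
    for key in record.inputs:
        if key == "right":
            state["x"] += 1
        elif key == "left":
            state["x"] -= 1
        elif key == "open_chest":
            state["loot"] += rng.randint(1, 10)
    return state


def state_digest(state):
    """Stable hash of the world state, so two replays can be compared cheaply."""
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


record = ReproRecord("build-1234", seed=42,
                     inputs=["right", "open_chest", "left", "open_chest"])
first, second = replay(record), replay(record)
assert state_digest(first) == state_digest(second)  # identical on every replay
```

Attaching the digest to the bug report lets a developer confirm that their local replay reached the same world state the agent saw before starting to debug.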

What Changed

The studio shipped on schedule — without the last-minute crunch that had defined their previous launches. Post-launch bug reports dropped significantly compared to their prior title, and the day-one patch was the smallest in the studio's history.

The QA team wasn't replaced — they were freed. Instead of spending their days on repetitive playthroughs, they focused on subjective quality: game feel, narrative pacing, difficulty tuning, and player experience. The work that actually requires human judgment.

We went from dreading content-complete milestones to looking forward to them. For the first time, we actually knew what was broken before our players told us.

— QA Director, European Game Studio

How HISD's Procurement Team Scaled Compliance Without Scaling Headcount

The largest school district in Texas partnered with Filuta to automate compliance monitoring, and turned their procurement operation into a model for public-sector efficiency.

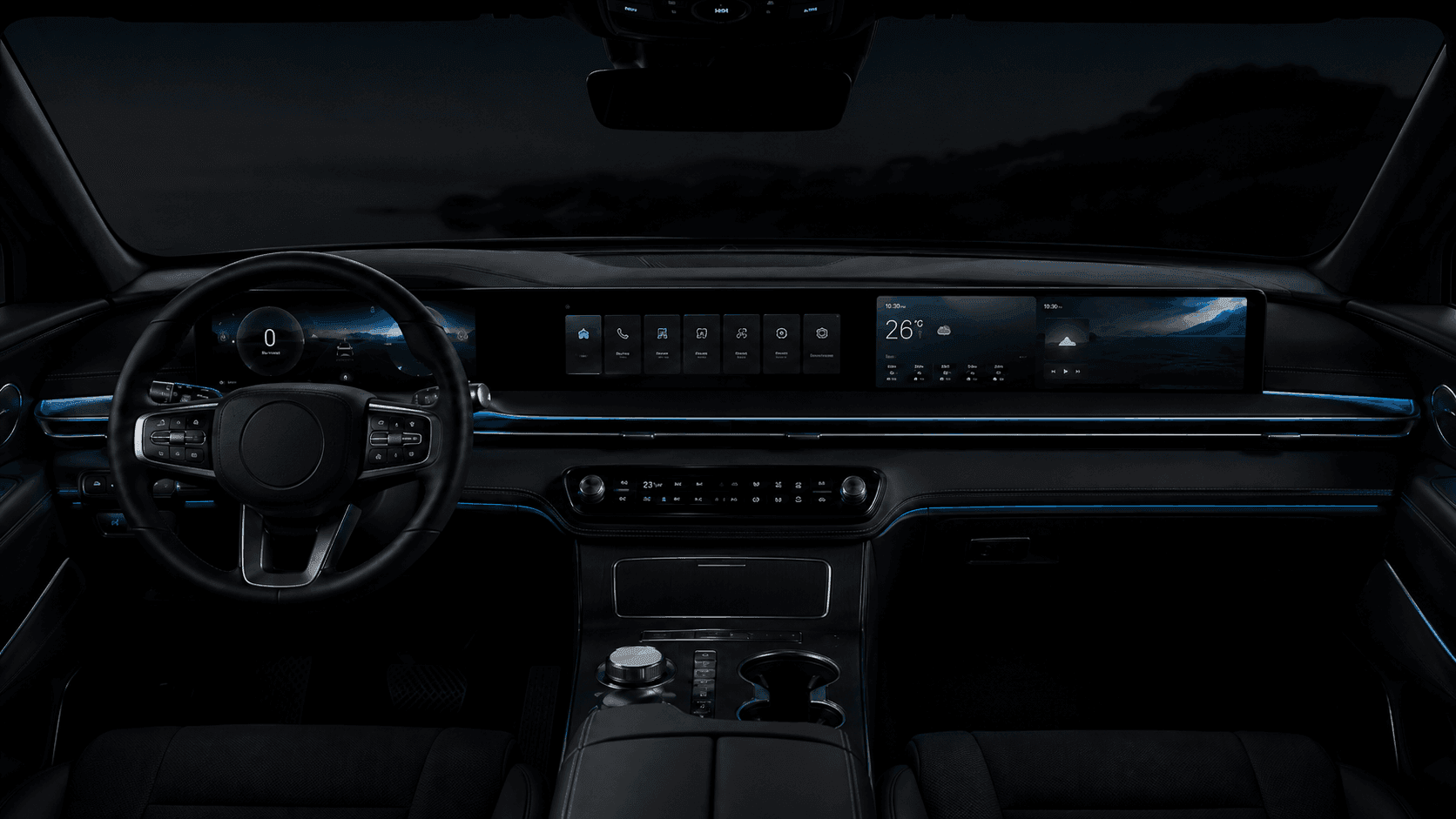

Autonomous HMI Testing for a European Automotive OEM

How Filuta AI's autonomous agents validated a next-generation infotainment system across 38 languages and multiple vehicle platforms.